In-Memory Computing: Bringing Memory and Processing Together

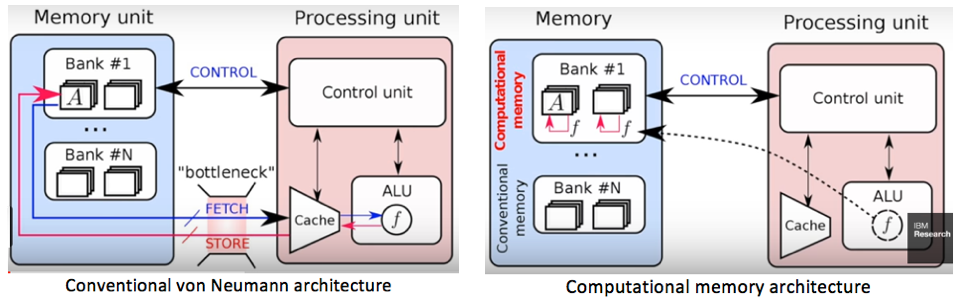

One of the biggest bottlenecks in today’s computers isn’t how fast they can calculate — it’s how often they have to move data between the processor and the memory. This constant back-and-forth, known as the von Neumann bottleneck, wastes time and energy.

In-memory computing aims to solve this by doing something radically different:

💡 Perform the computation directly where the data is stored.

Why Moving Data is a Problem

In a traditional computer:

- Data is stored in memory (RAM, cache, or storage).

- The processor fetches the data from memory.

- The processor processes the data.

- Results are sent back to memory.

For small tasks, this works fine. But modern AI workloads deal with billions of data points. Fetching and writing data over and over wastes huge amounts of energy.

How In-Memory Computing Works

Instead of separating memory and logic:

- Memory cells (like memristors, phase-change memory, MRAM, or ReRAM) are designed to store data and also perform operations.

- Common tasks, like multiplication and addition for neural networks, can happen inside the memory array.

- This reduces data movement, making computation faster and far more energy-efficient.
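
To make the data-movement argument concrete, here is a minimal counting sketch for one matrix-vector product, the core operation of a neural-network layer. It assumes no caching and counts each value that crosses the memory boundary as one "word". The function names and the 1024 x 1024 layer size are illustrative choices, not taken from any specific hardware.

```python
def words_moved_von_neumann(rows: int, cols: int) -> int:
    """Words crossing the memory bus for y = W @ x when the weights
    live in a separate memory and nothing is cached (simplifying assumption)."""
    weights = rows * cols   # every weight is fetched once
    inputs = cols           # the input vector is fetched
    outputs = rows          # the results are written back
    return weights + inputs + outputs


def words_moved_in_memory(rows: int, cols: int) -> int:
    """Words crossing the array boundary when W stays inside the memory array."""
    inputs = cols           # inputs are driven into the array
    outputs = rows          # results are read out of the array
    return inputs + outputs


rows, cols = 1024, 1024     # hypothetical layer size
vn = words_moved_von_neumann(rows, cols)
imc = words_moved_in_memory(rows, cols)
print(f"von Neumann: {vn} words, in-memory: {imc} words, ratio: {vn / imc:.0f}x")
```

Under these assumptions the weights dominate the traffic, which is why keeping them stationary inside the array pays off most for large layers.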

Why It’s Important for Neuromorphic Computing

Neuromorphic chips mimic the brain’s neuron–synapse structure. In biology:

- Neurons process signals.

- Synapses both store connection strength and influence signal transmission.

In-memory computing is the hardware counterpart to that idea — the “synapse” stores and processes at the same location. This:

- Reduces latency.

- Cuts power consumption.

- Enables real-time processing in AI and edge devices.

ReRAM (Memristor)-Based In-Memory Computing

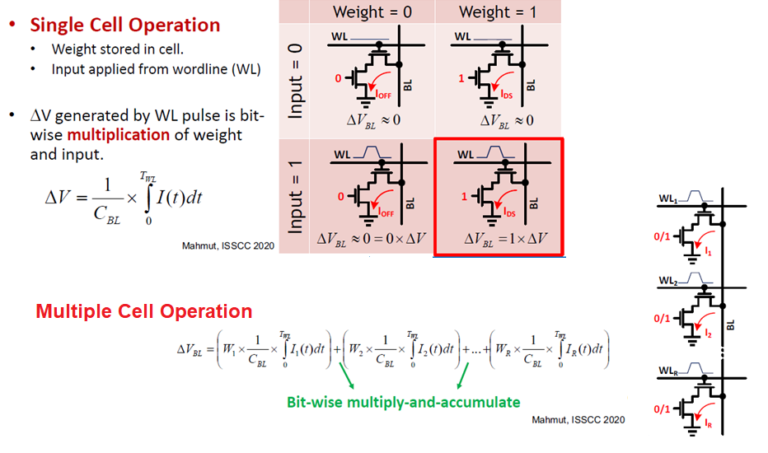

What it is: Resistive RAM (ReRAM), often implemented with memristive devices, stores information as different resistance states. By applying voltages across crossbar arrays, large numbers of multiply–accumulate (MAC) operations can be performed in parallel using Ohm’s and Kirchhoff’s laws. ReRAM offers high-density non-volatile storage and the potential for efficient in-memory computing, while ReRAM-enabled accelerators can mitigate the von Neumann bottleneck.
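
The crossbar idea can be sketched numerically. In an idealized array, each cell contributes a current I_ij = G_ij * V_i (Ohm's law), and the currents on a shared column wire add up (Kirchhoff's current law), so each column current is a dot product. The sketch below assumes ideal devices with no wire resistance or noise; the function name `crossbar_mac` and the conductance and voltage values are made up for illustration.

```python
import numpy as np


def crossbar_mac(conductances: np.ndarray, voltages: np.ndarray) -> np.ndarray:
    """Idealized crossbar read.

    conductances: shape (n_rows, n_cols), in siemens; G[i, j] joins row i to column j.
    voltages:     shape (n_rows,), in volts, applied to the row wires.
    Returns the column currents, shape (n_cols,), in amperes.
    """
    # Ohm's law per cell: I_ij = G_ij * V_i
    cell_currents = conductances * voltages[:, None]
    # Kirchhoff's current law: currents sharing a column wire sum together
    return cell_currents.sum(axis=0)


# Illustrative values only (microsiemens-range conductances, sub-volt reads)
G = np.array([[1e-6, 2e-6],
              [3e-6, 4e-6],
              [5e-6, 6e-6]])
V = np.array([0.1, 0.2, 0.3])

I = crossbar_mac(G, V)
print(I)        # column currents, about 2.2 uA and 2.8 uA
print(V @ G)    # the same result written as a vector-matrix product
```

All columns are read in the same step, which is where the parallelism comes from: the array computes a full vector-matrix product in one analog read.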

- Strengths: Non-volatile, high density, analog MAC with massive parallelism, low data movement.

- Design notes: Requires ADC/DAC interfaces, write-verify for precise weights, and techniques for variability/noise compensation.

- Best for: Inference accelerators, edge AI with tight energy budgets, sparse/quantized models.

- Challenges: ReRAM devices face several notable challenges. First, implementing high-precision analog-to-digital converter (ADC)–based readout circuits is particularly difficult. Second, performance can be hindered by device non-idealities, such as variations between cells. Finally, the nonlinear and asymmetric conductance updates observed in ReRAM devices can significantly degrade training accuracy.

- Solutions: Binary neural networks and multi-range quantization address the first challenge. Mixed-precision training can address the second and third challenges.
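
The first two challenges above can be explored with a toy model. The sketch below adds multiplicative cell-to-cell variation to the stored conductances and passes the column currents through a uniform ADC. The Gaussian variation model, the 5% spread, the full-scale choice, and all function names are assumptions made for illustration, not measured device behavior.

```python
import numpy as np


def quantize_adc(currents: np.ndarray, full_scale: float, bits: int) -> np.ndarray:
    """Uniform ADC model: clip to [0, full_scale], round to 2**bits - 1 steps."""
    levels = 2 ** bits - 1
    clipped = np.clip(currents, 0.0, full_scale)
    codes = np.round(clipped / full_scale * levels)
    return codes / levels * full_scale


def noisy_crossbar_read(G, V, sigma_rel, adc_bits, rng):
    """Crossbar MAC with conductance variation followed by ADC readout."""
    # Assumed variation model: each cell deviates by a Gaussian relative error
    G_actual = G * (1.0 + sigma_rel * rng.standard_normal(G.shape))
    G_actual = np.clip(G_actual, 0.0, None)   # conductance cannot go negative
    currents = V @ G_actual
    # Full scale: the largest column current the nominal array could produce
    full_scale = V.max() * G.max() * G.shape[0]
    return quantize_adc(currents, full_scale, adc_bits)


rng = np.random.default_rng(0)
G = rng.uniform(1e-6, 1e-5, size=(64, 16))   # hypothetical 64 x 16 array
V = rng.uniform(0.0, 0.2, size=64)
ideal = V @ G

for bits in (4, 8):
    measured = noisy_crossbar_read(G, V, sigma_rel=0.05, adc_bits=bits, rng=rng)
    worst = np.abs(measured - ideal).max() / ideal.max()
    print(f"{bits}-bit ADC, 5% variation: worst relative error {worst:.3f}")
```

Sweeping `adc_bits` and `sigma_rel` in a model like this gives a rough feel for when ADC resolution or device variation becomes the limiting factor for a given layer.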

Reference: Li et al. Review of memristor devices in neuromorphic computing, J. Phys. D, 2018.

SRAM-Based In-Memory Computing

What it is: Digital/bitline compute primitives are embedded into standard SRAM arrays (e.g., 6T/8T/10T cells) to perform operations like bitline summation, XNOR–popcount, and approximate MACs without moving data to external ALUs.

- Strengths: CMOS compatibility, mature tooling, deterministic digital behavior, easier integration with existing SoCs.

- Design notes: Limited analog precision per cycle; often pairs well with quantization (e.g., binary/ternary networks) and controller-side accumulation.

- Best for: Low-latency on-chip accelerators, vision pipelines, and tightly-coupled ML kernels.
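
The XNOR–popcount primitive mentioned above can be sketched in software. For two vectors with entries in {+1, -1}, encoded as bits (1 for +1, 0 for -1), the dot product equals 2 * popcount(XNOR(a, b)) - n. The helper names `pack_bits` and `xnor_popcount_dot` are illustrative; a real SRAM macro evaluates this on the bitlines rather than in a loop.

```python
def pack_bits(values):
    """Pack a sequence of +1/-1 values into an int; bit k is 1 when values[k] == +1."""
    word = 0
    for k, v in enumerate(values):
        if v == 1:
            word |= 1 << k
        elif v != -1:
            raise ValueError("values must be +1 or -1")
    return word


def xnor_popcount_dot(a_bits: int, b_bits: int, n: int) -> int:
    """Dot product of two n-element +1/-1 vectors given in packed form."""
    mask = (1 << n) - 1
    agree = ~(a_bits ^ b_bits) & mask    # XNOR: 1 wherever the signs match
    matches = bin(agree).count("1")      # popcount
    return 2 * matches - n               # matches minus mismatches


a = [1, -1, 1, 1, -1, -1, 1, -1]
b = [1, 1, -1, 1, -1, 1, 1, -1]

result = xnor_popcount_dot(pack_bits(a), pack_bits(b), len(a))
reference = sum(x * y for x, y in zip(a, b))
print(result, reference)   # both are 2
```

This is why binary networks pair well with SRAM-based compute: the multiply collapses to a bitwise gate and the accumulate to a bit count.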

Quick Comparison: ReRAM vs. SRAM for In-Memory Compute

- Volatility: ReRAM is non-volatile; SRAM is volatile.

- Precision: ReRAM often favors analog MAC with ADC/DAC; SRAM favors digital/bitwise ops.

- Density & retention: ReRAM typically offers higher density and retains data without power; SRAM offers faster access but has a larger cell area.

- Integration: SRAM slots into standard CMOS flows; ReRAM may require BEOL integration and device calibration.

Optimization

Designing neural network architectures that can leverage in-memory computing requires optimizing for multiple objectives like power, latency, and accuracy.
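
One common way to handle several objectives at once is to keep only the Pareto-optimal candidates, meaning those that no other candidate beats on every objective. The sketch below is one possible approach, not a method taken from the text; the candidate names and their power, latency, and accuracy figures are hypothetical.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    name: str
    power_mw: float     # lower is better
    latency_ms: float   # lower is better
    accuracy: float     # higher is better


def dominates(a: Candidate, b: Candidate) -> bool:
    """True if a is at least as good as b everywhere and strictly better somewhere."""
    no_worse = (a.power_mw <= b.power_mw
                and a.latency_ms <= b.latency_ms
                and a.accuracy >= b.accuracy)
    strictly_better = (a.power_mw < b.power_mw
                       or a.latency_ms < b.latency_ms
                       or a.accuracy > b.accuracy)
    return no_worse and strictly_better


def pareto_front(candidates):
    """Keep the candidates that no other candidate dominates."""
    return [c for c in candidates
            if not any(dominates(other, c) for other in candidates)]


# Hypothetical design points, for illustration only
candidates = [
    Candidate("net_a", 12.0, 3.0, 0.91),
    Candidate("net_b", 8.0, 4.5, 0.89),
    Candidate("net_c", 15.0, 3.5, 0.90),   # worse than net_a on all three
    Candidate("net_d", 5.0, 6.0, 0.84),
]

front = pareto_front(candidates)
print([c.name for c in front])   # ['net_a', 'net_b', 'net_d']
```

The surviving candidates represent genuine trade-offs; choosing among them depends on the power and latency budget of the target device.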

Putting In-Memory Computing Together with a NoSQL DB

There is no one-size-fits-all solution. Keeping the entire dataset purely in memory can be too costly, especially for data that we are not going to access frequently.

- The Challenge: Complexity associated with synchronising two separate data systems.

- The Solution: Have an implicit plug-in.
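
One possible reading of an "implicit plug-in" is a read-through, write-through cache: the application talks only to the in-memory layer, which keeps itself synchronized with the database behind it. The sketch below illustrates that interpretation under stated assumptions. The class name `ReadThroughCache` is hypothetical, and a plain `dict` stands in for the NoSQL store.

```python
from collections import OrderedDict


class ReadThroughCache:
    """Small in-memory layer in front of a slower key-value store.

    Hot keys stay in memory (least recently used entries are evicted);
    every write goes to the backing store too, so the two never diverge.
    """

    def __init__(self, backing_store, capacity: int):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self._store = backing_store
        self._capacity = capacity
        self._hot = OrderedDict()

    def get(self, key):
        if key in self._hot:
            self._hot.move_to_end(key)     # mark as recently used
            return self._hot[key]
        value = self._store[key]           # miss: read through to the store
        self._remember(key, value)
        return value

    def put(self, key, value):
        self._store[key] = value           # write through to the store
        self._remember(key, value)

    def _remember(self, key, value):
        self._hot[key] = value
        self._hot.move_to_end(key)
        if len(self._hot) > self._capacity:
            self._hot.popitem(last=False)  # evict the least recently used key

    def cached_keys(self):
        return list(self._hot)


db = {"user:1": "Ada", "user:2": "Grace", "user:3": "Edsger"}  # stand-in for a NoSQL DB
cache = ReadThroughCache(db, capacity=2)

cache.get("user:1")
cache.get("user:2")
cache.get("user:1")
cache.get("user:3")                 # evicts user:2, the least recently used
print(cache.cached_keys())          # ['user:1', 'user:3']
```

Because synchronization lives inside the cache layer, application code never has to coordinate the two systems itself, and only frequently accessed data occupies memory.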

Real-World Examples

- IBM is exploring in-memory computing for AI accelerators.

- Intel’s Loihi uses concepts similar to in-memory processing for neuromorphic tasks.

- Research labs are building memristor-based arrays that can perform matrix multiplication inside memory.

2D Materials in In-Memory Computing

Recent research has explored the use of two-dimensional (2D) materials, such as graphene, transition metal dichalcogenides (TMDs), and hexagonal boron nitride, in developing next-generation in-memory computing devices. These materials offer exceptional electrical, optical, and mechanical properties, enabling ultra-thin, high-density, and energy-efficient memory arrays. Their atomic-scale thickness allows for precise control over charge transport, making them promising candidates for scalable neuromorphic hardware.

Studies have evaluated various 2D memory technologies for performance, endurance, and compatibility with in-memory processing architectures, showing strong potential for both AI acceleration and edge computing applications.

Reference: Sun, Yibo; Wang, Shuiyuan; Zhang, Qiran; Zhou, Peng. Evaluation of different 2D memory technologies for in-memory computing, Device, 2(12), 2024. Elsevier.